Forty percent of consumers find the ads they see irrelevant. That number comes from Bain & Company's 2024 research and points directly to a structural failure in how enterprise brands produce and test creative. The problem is that most organizations build creative without a testing architecture that could tell them what is working, for whom, and why.

For enterprise marketing leaders managing $10M+ in ad spend, creative quality is the highest-impact variable in the entire media plan. Kantar's analysis, matching its Link database of over 250,000 ads with WARC's ROI database, found that the most creative and effective ads generate more than four times the profit of average creative. A 4x profitability multiplier is embedded in the creative decision, and most enterprise teams are not systematically testing for it.

Why Enterprise Creative Production Is Broken for Brand Advertising

The traditional agency workflow moves in a single direction: brief, concept, production, flight, and post-campaign report. Forrester's Total Economic Impact study of enterprise creative operations found that a composite $10B organization running 300 campaigns per year needs over 550,000 assets annually. Under legacy production models, revision and approval cycles alone consumed up to 75% of elapsed production time.

The output of this process reaches consumers who increasingly reject it, but what they reject is irrelevance, not advertising itself. The same Bain research found that nearly 45% of consumers do not mind ads if they are relevant, and 40% say well-executed ads actively help their shopping. The problem is a production process that prevents precision targeting and continuous refinement of creative.

Large enterprises frequently manage six to twenty brand variants across campaigns, with no systematic way to test which ones drive incremental lift. Performance marketers inherit brand creative designed for awareness and then try to optimize it with tools built for direct response. The measurement frameworks do not connect. The creative brief does not specify the persona. The production cycle takes so long that by the time results accumulate, the next campaign is already in production.

Bain's analysis found that AI-powered personalization in retail can cut content creation from weeks to hours, revealing the scale of inefficiency baked into legacy workflows. That time compression matters because speed-to-insight is what separates brands that compound creative learning from brands that repeat expensive mistakes on an 18-month lag.

The Six Creative Levers That Actually Determine Brand Advertising Outcomes

Most enterprise A/B testing programs test surface variables like button color, headline punctuation, and hero image crop. These are not creative levers. Six variables actually determine whether brand advertising drives business outcomes:

Value proposition: What claim are you making, and is it distinct from category norms?

CTA: Are you asking for behavior appropriate to the buyer's stage?

Emotional theme: What feeling does this creative create, and does it match the persona's decision context?

Messaging: What specific language carries the main idea?

People and talent: Who appears, and do they build recognition and trust for this audience?

Art and imagery: What visual system signals brand identity and category relevance?

System1's research on brand consistency, conducted with the IPA across over 4,000 ads over five years, confirms that emotional distinctiveness within a category drives outsized business results. Dove's #ChangeTheCompliment campaign outperformed its category average by 3.1 points specifically because it tested a different emotional theme than the functional, product-focused messaging that dominated the personal care space.

Each lever maps differently to the persona and funnel stage. A creative variant built to drive brand recall for a high-income fitness persona requires a different emotional theme and messaging than one designed to shift a mid-funnel buyer from consideration to purchase intent. Byron Sharp's foundational work on brand growth identifies distinctive sensory cues as core memory structures that make brands easier to notice and easier to buy. Testing art, imagery, and talent variables is not aesthetic work; it is testing against a primary driver of mental availability.

The performance gap between creative developed by testing these six levers and creative that skips them is not marginal. Top-performing creative variants routinely exceed average variants by 300% or more in downstream conversion impact, which makes brand advertising creative testing the highest-impact optimization available to any enterprise marketing team.

How Continuous Creative Testing Changes the Production Workflow for Enterprise Teams

The structural shift from batch testing to continuous testing changes the production workflow at every stage. The old sequence involved a brief, concept, production, flight, and report. The new sequence uses a hypothesis, a lightweight variant, a live experiment, real-time optimization, and scaled production of proven winners only.

This reordering has a direct financial consequence. When only proven creative variants receive expensive broadcast or CTV production treatment, enterprises stop paying full production costs for concepts that would have underperformed. The budget that was absorbing failed creative experiments gets redirected toward scaling what works.

Cross-channel creative testing introduces a specific technical requirement that most enterprise teams underestimate. To isolate the creative variable from the channel variable, the same persona must be reached on CTV, native, audio, and display at roughly the same time. Without a unified audience across channels, what looks like a creative performance difference might be a difference in channel audience composition. In that case, the test is measuring noise rather than the creative signal.
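A minimal sketch of how channel mix can distort a creative comparison. All figures are invented for illustration, and the function names (`pooled_rates`, `per_channel_rates`, `standardized_rates`) are hypothetical, not part of any platform described here. Variant A converts better on every channel, yet looks worse in the pooled numbers because most of its impressions ran on the lower-converting channel.

```python
from collections import defaultdict

# Hypothetical delivery log: (variant, channel, impressions, conversions).
ROWS = [
    ("A", "ctv", 9000, 108),      # 1.2% on CTV
    ("A", "display", 1000, 32),   # 3.2% on display
    ("B", "ctv", 1000, 10),       # 1.0% on CTV
    ("B", "display", 9000, 270),  # 3.0% on display
]


def pooled_rates(rows):
    """Conversion rate per variant, ignoring which channel delivered it."""
    totals = defaultdict(lambda: [0, 0])
    for variant, _channel, imps, convs in rows:
        totals[variant][0] += imps
        totals[variant][1] += convs
    return {v: convs / imps for v, (imps, convs) in totals.items()}


def per_channel_rates(rows):
    """Conversion rate for each (variant, channel) pair."""
    return {(v, ch): convs / imps for v, ch, imps, convs in rows}


def standardized_rates(rows):
    """Re-weight each variant's channel rates to one shared channel mix."""
    channel_imps = defaultdict(int)
    for _variant, channel, imps, _convs in rows:
        channel_imps[channel] += imps
    total = sum(channel_imps.values())
    out = defaultdict(float)
    for (variant, channel), rate in per_channel_rates(rows).items():
        out[variant] += rate * channel_imps[channel] / total
    return dict(out)


if __name__ == "__main__":
    print("pooled:", pooled_rates(ROWS))
    print("per channel:", per_channel_rates(ROWS))
    print("standardized:", standardized_rates(ROWS))
```

In this made-up data the pooled rates are 1.4% for A and 2.8% for B, while A leads within both channels. Standardizing to a common channel mix is only a partial repair: it corrects for delivery volume, not for differences in who each channel reaches, which is why the same persona has to be reached on every channel.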

Seventy percent of enterprise CMOs are prioritizing brand advertising in 2025. The teams scaling fastest are not the ones with the largest creative budgets. They are the ones that have industrialized creative learning, treating every ad flight as a structured experiment that feeds into the next production decision rather than a standalone creative statement.

Gartner's 2025 CMO Spend Survey found that 59% of CMOs report their current allocations are insufficient to meet strategic goals. In that environment, the ability to test rapidly and reallocate to winners is not a process improvement but a competitive necessity.

Connecting Creative Testing to Brand Lift Measurement: The Missing Link for Performance Marketers

Performance marketers are trained to trust click-through rates and ROAS. Brand creative does not optimize for those metrics. It builds purchase intent, brand recall, aided awareness, and high-intent traffic. The measurement framework has to match the creative objective, or the testing produces conclusions that are actively misleading.

Proper brand advertising creative testing connects variant performance to full-funnel outcomes: site visit behavior, time-to-purchase, pipeline entry, and ultimately revenue. A creative variant should not be declared a winner based on CTR. It should be declared a winner based on whether the people exposed to it converted at a higher rate than those exposed to a control, as measured through an incrementality study.

PSA holdout studies and UTM lift analysis isolate which creative variant caused behavior change versus what would have happened organically. This distinction is what converts creative testing from a marketing exercise into a CFO-ready justification for production budget allocation. Without a control group, every performance data point is ambiguous: it cannot be separated from market conditions, seasonality, or paid search activity running simultaneously.
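The exposed-versus-control comparison can be sketched as a simple lift calculation. This is an illustrative sketch, not the methodology of any specific vendor: it assumes users were randomly assigned to the exposed and holdout groups, uses a standard two-proportion z-test for significance, and the function name `incremental_lift` and the sample counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def incremental_lift(exposed_n, exposed_conv, control_n, control_conv):
    """Compare conversion rates of an exposed group and a holdout group.

    Returns absolute lift, relative lift, a z statistic, and a two-sided
    p-value from a pooled two-proportion z-test.
    """
    p_exposed = exposed_conv / exposed_n
    p_control = control_conv / control_n
    abs_lift = p_exposed - p_control
    rel_lift = abs_lift / p_control if p_control > 0 else float("nan")

    # Pooled standard error under the null hypothesis of no difference.
    pooled = (exposed_conv + control_conv) / (exposed_n + control_n)
    se = sqrt(pooled * (1 - pooled) * (1 / exposed_n + 1 / control_n))
    z = abs_lift / se if se > 0 else 0.0
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    return {
        "exposed_rate": p_exposed,
        "control_rate": p_control,
        "absolute_lift": abs_lift,
        "relative_lift": rel_lift,
        "z": z,
        "p_value": p_value,
    }


if __name__ == "__main__":
    # Invented counts: 50,000 users per group.
    result = incremental_lift(50_000, 600, 50_000, 500)
    for key, value in result.items():
        print(f"{key}: {value:.4f}")
```

The conversions in the control group estimate what would have happened organically, so only the difference between the two rates is credited to the creative.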

Nielsen's brand lift measurement research confirms that the measurement methodology must match the creative objective. Surveys measuring recall and purchase intent capture different signals than click-stream analysis, and both are necessary to fully attribute creative variant performance across a brand advertising campaign.

For performance marketers transitioning to upper-funnel brand strategy, the measurement discipline remains the same:

Form a hypothesis.

Define the metric before the test runs.

Hold out a control group.

Measure downstream, not just the immediate response.

The creative variables are different from bid variables, but the experimental rigor is identical.

What the Best Enterprise Brand Teams Are Building Now: A Continuous Creative Engine

The leading organizational change in 2025 is not a new channel or a new platform. It is a structural shift from the annual creative campaign model to a persistent testing infrastructure in which every ad is a real-time experiment, and the creative library compounds over time.

This requires three specific changes to how enterprise brand teams operate.

First, creative briefs must lead with persona, not campaign. A campaign-first brief produces creative designed to execute a concept. A persona-first brief produces creative designed to resonate with a specific audience at a specific stage of their buying journey. The brief specifies which of the six levers is being tested, the hypothesis, and the measurement outcome that will determine the winner.
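One way to picture a persona-first brief is as a small record that cannot be created without a persona, a lever, a hypothesis, and a metric. The sketch below is hypothetical: the class and field names (`CreativeBrief`, `lever_under_test`, `primary_metric`, and so on) and the example values are assumptions for illustration, not a schema taken from any tool.

```python
from dataclasses import dataclass
from enum import Enum


class Lever(Enum):
    """The six creative levers named in this article."""
    VALUE_PROPOSITION = "value proposition"
    CTA = "cta"
    EMOTIONAL_THEME = "emotional theme"
    MESSAGING = "messaging"
    PEOPLE_AND_TALENT = "people and talent"
    ART_AND_IMAGERY = "art and imagery"


@dataclass(frozen=True)
class CreativeBrief:
    persona: str              # who the creative is for
    funnel_stage: str         # e.g. "awareness", "consideration"
    lever_under_test: Lever   # the single lever this test varies
    hypothesis: str           # what is expected to happen, and why
    primary_metric: str       # the outcome that decides the winner

    def __post_init__(self):
        # Reject briefs that leave any decision-critical field blank.
        for name in ("persona", "funnel_stage", "hypothesis", "primary_metric"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must be specified before production starts")


if __name__ == "__main__":
    brief = CreativeBrief(
        persona="high-income fitness enthusiast",
        funnel_stage="consideration",
        lever_under_test=Lever.EMOTIONAL_THEME,
        hypothesis="A community theme lifts purchase intent more than a performance theme",
        primary_metric="purchase-intent lift vs. holdout",
    )
    print(brief)
```

Limiting each brief to one lever keeps the result interpretable: if two levers change at once, a winning variant does not say which change mattered.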

Second, variant production must be channel-agnostic. Creative developed only for CTV cannot be efficiently tested against the same persona on native or audio. Channel-agnostic variant production builds assets that can be deployed simultaneously across the full open internet, the space outside social and search where 61% of online time is spent.

Third, measurement science must be built into the launch plan, not added after the campaign ends. The pixel map, the holdout design, the KPI definition, and the incrementality study framework must exist before a single ad impression is purchased. Post-campaign measurement cannot reconstruct a proper control group.
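A holdout design fixed before launch can be as simple as a deterministic assignment rule. The sketch below is one common approach, hash-based bucketing, shown under stated assumptions: the function name `assign_group`, the 10% holdout share, and the experiment label are hypothetical, and a real deployment would apply the rule to whatever resolved identity the campaign uses.

```python
import hashlib


def assign_group(user_id: str, experiment: str, holdout_share: float = 0.1) -> str:
    """Deterministically assign a user to 'holdout' or 'exposed'.

    The same user and experiment name always produce the same group, so
    the split can be fixed before launch and reproduced at analysis time.
    """
    if not 0 < holdout_share < 1:
        raise ValueError("holdout_share must be between 0 and 1")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "holdout" if bucket < holdout_share else "exposed"


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    groups = [assign_group(u, "emotional-theme-test") for u in users]
    share = groups.count("holdout") / len(groups)
    print(f"holdout share: {share:.3f}")
```

Because assignment depends only on the identifier and the experiment name, the control group exists from the first impression and does not need to be reconstructed after the campaign ends.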

The competitive advantage this builds is cumulative and proprietary. Brands 18 months into continuous creative testing possess a dataset of which emotional themes, value propositions, and visual systems drive conversion for each of their personas. No agency can replicate that dataset. No competitor can access it. It accrues exclusively to the brand that built the testing infrastructure and ran the experiments.

McKinsey's analysis of performance branding identifies exactly this compounding effect as the core value driver of brands that integrate rigorous measurement into creative development. The brands winning in the long term treat creative testing the same way performance teams treat paid search bid optimization: systematically, with explicit hypotheses, and with a direct connection to downstream revenue.

How Precision Brand Advertising Closes the Creative Testing Gap

Most enterprise creative testing fails at a specific point: the inability to reach the same persona across channels simultaneously. Without a unified audience, a creative test conflates channel performance with creative performance. The result is ambiguous data that cannot justify production decisions.

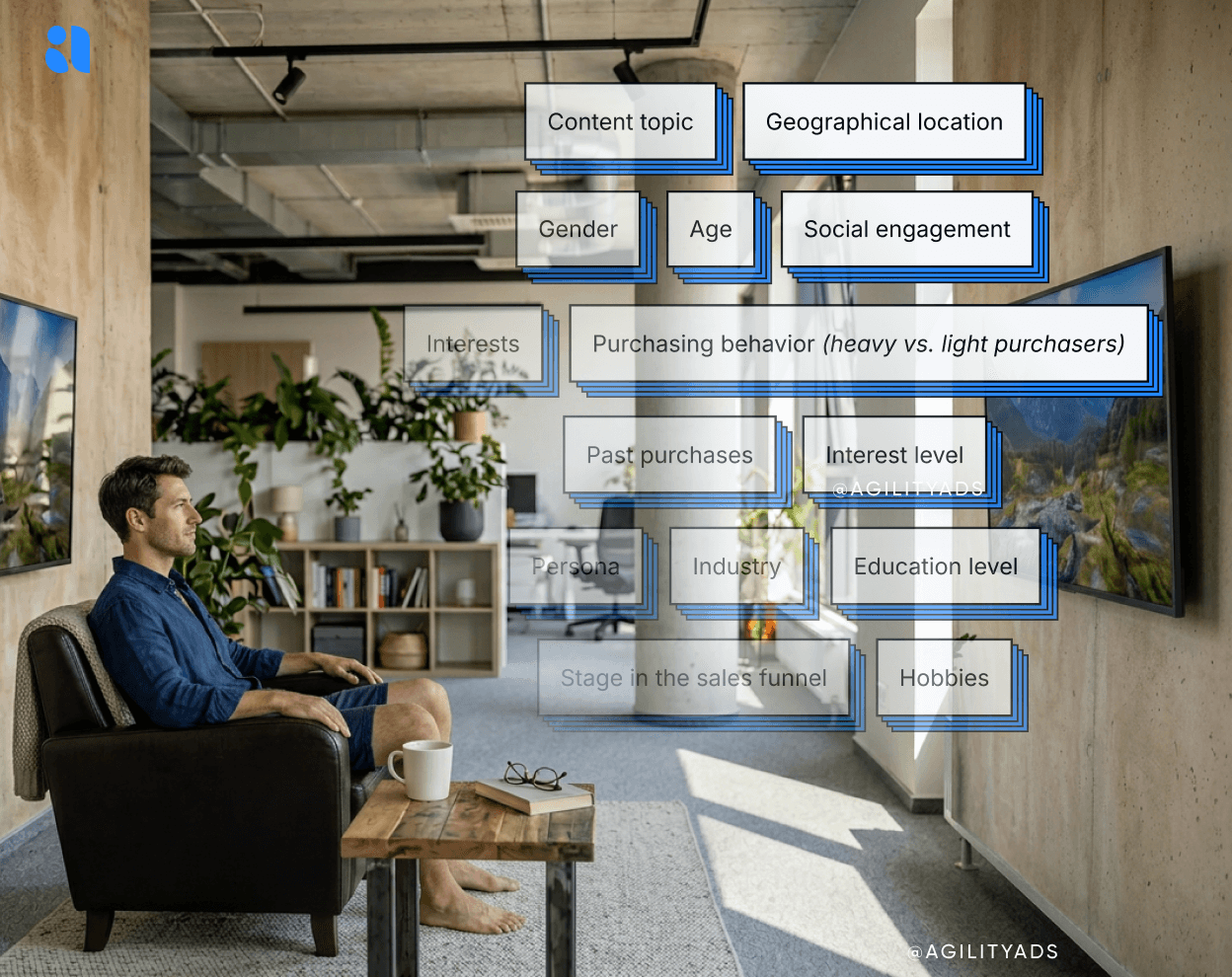

Agility's platform addresses this directly through four integrated capabilities. Persona strategy engineering builds multidimensional audience profiles using geofencing, behavioral, retail, and credit card data drawn from 38+ geo-location data sources, creating the precise audience foundation that brand advertising creative testing requires. Media buying across CTV, native, audio, and display then reaches that same persona across all channels simultaneously, so creative variants are tested against a consistent audience, not a different mix of people on each platform.

Precision creative testing applies the six high-impact levers, including value proposition, emotional theme, and talent, as real-time experiments across persona and funnel stage. Measurement science then tracks variant performance through the full buyer journey, from identity resolution to site visit to pipeline entry, using PSA and incrementality studies to isolate creative causation from organic behavior.

The results compound over time. One national fitness brand running this model generated $11.2M in incremental revenue and a 45% reduction in CPA across six brands. A multi-brand retailer saw 108% revenue growth at third-party distributors. Binet & Field's IPA Databank research indicates that 60% of sales come from long-term brand effects, meaning these are not awareness metrics in isolation. They are revenue metrics tied directly to which creative variants were promoted and which were killed early, compounding toward the $6 return for every $1 invested in brand advertising that the research supports.

See what precision brand advertising looks like for your brand at agilityads.com/test-precision-advertising.

Frequently Asked Questions

Q: What is creative testing, and how is it different from standard A/B testing?

Brand advertising creative testing is a structured experimental framework for measuring which creative variables, including value proposition, emotional theme, talent, messaging, CTA, and imagery, drive downstream brand and revenue outcomes for specific audience personas. Standard A/B testing typically measures immediate response metrics, such as click-through rate, against a single variable. Brand advertising creative testing measures full-funnel impact, from brand recall and purchase-intent lift through pipeline contribution, using holdout groups and incrementality studies to isolate creative causation. Kantar's research confirms that top-performing creative generates more than 4x the profit of average creative, a gap that only systematic testing can close.

Q: How do I measure whether a creative variant actually caused a lift in purchase intent, not just correlated with it?

The methodology that isolates creative causation is a PSA holdout study: a matched control group sees a public service announcement instead of your creative, and the performance difference between the exposed and unexposed groups is attributed to the creative itself. Incrementality testing frameworks can then quantify the lift in purchase intent, the growth in high-intent traffic, and the differences in downstream conversion rates with statistical confidence. Agility's measurement science combines PSA holdout, UTM lift, and journey tracking to give CFO-ready proof of which creative variants drove business outcomes.
